Pyramids, ice cream cones, crumbs, pancakes and trophies

Here’s another post in my occasional series I’m calling Diagrams in Software Engineering I Find Somewhat Problematic.

Today: the testing pyramid.

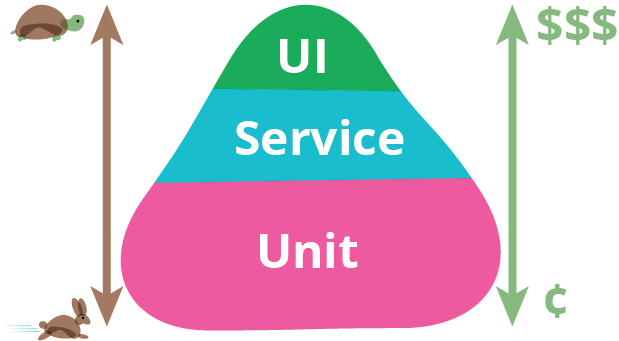

Here is the testing pyramid diagram from Martin Fowler’s article on the subject:

The key points portrayed in this diagram are:

- End-to-end / system / UI tests are slower and more expensive to run and maintain than unit tests.

- Because of this, you should focus testing efforts on unit tests, and be judicious with your use of end-to-end tests.

- Integration / service tests are useful to test that parts of the system work together without the overhead of a full system test.

Not everybody agrees with this viewpoint.

In particular, the ‘service’ or ‘integration’ test layer is the subject of much discussion.

Justin Searls argues that you should avoid integration tests in rich web applications, and advocates starting at each end of the pyramid so that your tests are either all-encompassing system tests or highly isolated unit tests.

On the other hand, Mike Cohn makes the case for non-UI service-layer tests, arguing that they can give you most of the benefits of full UI tests, but without the downsides.

Similarly, Kent Dodds is a big fan of integration testing in front-end JavaScript applications, because it avoids the perils of too much mocking. He even came up with the concept of the ‘test trophy’ pattern to illustrate it.

On the subject of mocks, Eric Elliott has written an excellent post arguing that heavy use of mocks in tests is a code smell. His advice for reducing them essentially boils down to decoupling and extracting smaller modules, using pure functions, isolating side-effects such as I/O (network access, rendering), and making more use of declarative code.
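To make that advice a little more concrete, here is a minimal sketch of my own (it is not taken from Eric’s post). The names `apply_discount`, `checkout` and `fetch_loyalty_years` are hypothetical, as is the discount rule. The idea is that the logic lives in a pure function, and the I/O is pushed to the edge and passed in as a plain function.

```python
from typing import Callable


def apply_discount(total: float, loyalty_years: int) -> float:
    """Pure function: no I/O, so it can be unit tested without any mocks.

    Hypothetical rule: 2% off per loyalty year, capped at 10%.
    """
    rate = min(loyalty_years * 0.02, 0.10)
    return round(total * (1 - rate), 2)


def checkout(
    customer_id: str,
    total: float,
    fetch_loyalty_years: Callable[[str], int],
) -> float:
    """Thin shell: the only part that touches I/O, via an injected function.

    In production, fetch_loyalty_years might call a database or an API.
    In a test, a one-line stand-in function is enough.
    """
    years = fetch_loyalty_years(customer_id)
    return apply_discount(total, years)
```

With this shape, most tests can call `apply_discount` directly, and the few that exercise `checkout` can pass in something like `lambda customer_id: 3` rather than reaching for a mocking library. Whether that split suits your codebase is, of course, a judgement call.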

So you can see how the balance of testing types can depend on your coding style, team make-up, the nature of the project, the libraries you use and so on. For me, the test pyramid is too prescriptive. Personally, I’m more in agreement with Justin Searls than I am with Kent Dodds on this one.

Having said that, the two biggest automated testing anti-patterns I still see in teams are:

- Testing (almost) nothing (the testing ‘plate of crumbs’)

- Trying to test everything in the same way (often an ‘ice cream cone’ or ‘pancake’ pattern)

Both boil down to one problem: not using automated testing intentionally, as a balanced way of managing risk and facilitating change.

It takes time and continuous improvement to grow and adapt a test suite that reduces the risk of change without its cost overheads preventing the team from making changes rapidly.

The key to this for me is to monitor and maintain your test suite with the same vigilance as you reserve for production code. If it’s a burden, you need to do something about it. If it isn’t helping you manage the risk of change, work out why.

What shape is your test suite? Is it working for you?

All the best,

– Jim